Matt Allison

Founder & CEO

Key Takeaways

The way brands track and manage their reputation has changed fundamentally—and most communications teams are still using yesterday's tools to fight today's battles.

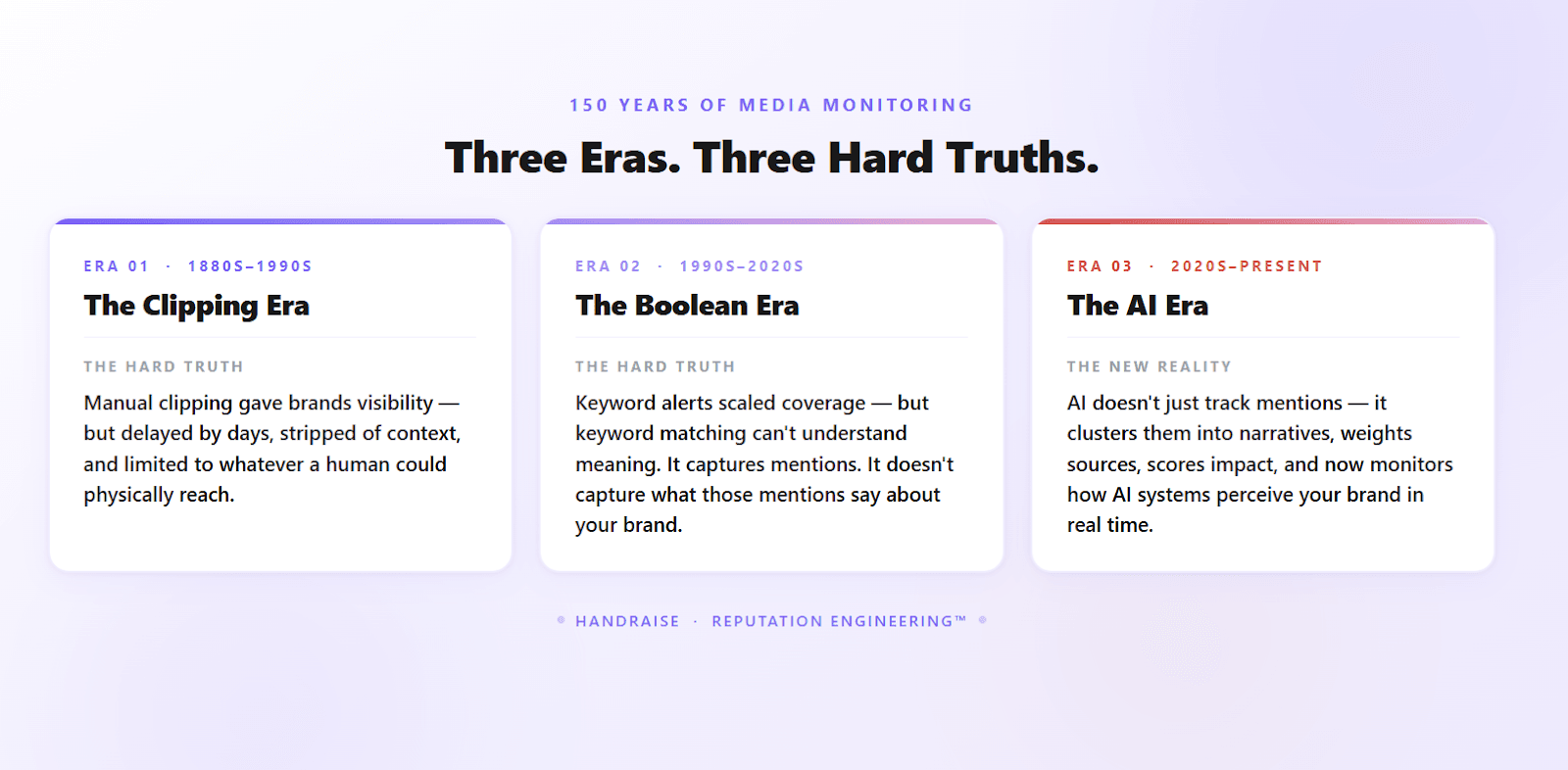

Media monitoring tools have evolved from manual newspaper clippings in the 1800s to AI-driven narrative intelligence platforms that analyze context, sentiment, and LLM brand perception in real time.

Legacy approaches—Boolean keyword searches, manual reporting cycles, and mention-counting dashboards—fail to capture the narrative layer where reputation is actually formed.

AI is now a primary audience: ChatGPT alone has surpassed 900 million weekly active users, and large language models increasingly shape how customers, investors, and journalists understand your brand.

Modern AI media monitoring moves the function from reactive data collection to proactive reputation engineering—giving communications leaders a strategic edge, not just a reporting tool.

If your media monitoring strategy still centers on counting mentions and generating quarterly reports, you're not just behind—you're operating blind in a world where narratives move in hours, not months.

Few enterprise functions have been transformed as quietly—and as completely—as media monitoring. What began as someone physically cutting articles out of newspapers has, over 150 years, evolved into something that would be unrecognizable to those early press clipping clerks. AI is now reshaping what media monitoring can do at a fundamental level—moving the function from reactive data collection to real-time narrative intelligence. Today, the modern media monitoring platform doesn't just watch for mentions—it analyzes narratives, tracks LLM brand perception, and surfaces signals that allow communications leaders to act before a story sets.

The evolution hasn't been linear, and it hasn't been smooth. Each wave of change left behind organizations still anchored to the last model. Understanding where media monitoring tools came from—and where they're going—is essential context for any VP or senior director of communications who wants to stay ahead of the reputation curve.

Where It All Began: The Press Clipping Era

The concept of media monitoring dates to the 1800s, when the only "media" worth tracking was print. Early press clipping services were exactly what they sound like: people hired to manually page through newspapers and physically cut out articles that referenced a client's name. Henry Romeike is widely credited with founding one of the first organized press clipping agencies, launching in London in 1881 before establishing a U.S. operation.

The process was entirely manual, slow, and narrow. Coverage was limited to publications that the agency could physically access. Delivery of clips was delayed by days or even weeks. And the analysis—if you could call it that—was purely volumetric. How many times was your brand mentioned? In which publications? That was the extent of the intelligence available.

By the early 20th century, broadcast media—first radio, then television—complicated the picture. Press clipping agencies expanded into broadcast monitoring, which required dedicated staff to record programs and transcribe relevant segments. The labor intensity grew substantially. The analytical depth did not.

These early methods weren't without value. For public figures and major brands, knowing you'd been mentioned in a prominent publication was meaningful. But the picture was fragmented, delayed, and stripped of context. There was no way to understand sentiment, narrative patterns, or the comparative standing of your brand against competitors.

How Did Digital Tools Change Media Monitoring?

The internet transformed the entire field of media monitoring in ways that seemed, at the time, to solve its most pressing limitations. Digital databases replaced physical archives. Online scanning replaced human page-turning. Keyword-based alerts—most famously Google Alerts—made it possible for any organization to track their brand name across a vast and growing digital landscape.

This era introduced what became the dominant paradigm for the next two decades: Boolean search. By stringing together keywords with logical operators, PR teams could theoretically filter the entire web for relevant mentions. The approach was faster than manual clipping and far broader in coverage. It also introduced a new set of problems that would frustrate practitioners for years.

Boolean searches generate enormous volumes of irrelevant results. They surface mentions without weighing context, prominence, or source quality. A passing reference to your company name buried in paragraph fourteen of an obscure blog carries the same flag as a front-page story in the Wall Street Journal. More significantly, keyword matching can't understand meaning. It captures mentions. It doesn't capture what those mentions actually say about you—or what narrative they're contributing to.
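To make the limitation concrete, here is a minimal sketch of a Boolean keyword filter. The function name `boolean_match`, the brand "Acme", and both sample texts are hypothetical, invented only for illustration; no real monitoring product's query syntax is implied.

```python
import re

def boolean_match(text, all_of=(), any_of=(), none_of=()):
    """Return True if text satisfies a simple AND / OR / NOT keyword query."""
    words = set(re.findall(r"[a-z0-9']+", text.lower()))
    has_all = all(term in words for term in all_of)
    has_any = any(term in words for term in any_of) if any_of else True
    has_none = not any(term in words for term in none_of)
    return has_all and has_any and has_none

# Two hypothetical pieces of coverage with very different weight.
front_page = "Acme recall widens as regulators open a formal safety probe"
passing = ("Our roundup of regional trade shows covers dozens of vendors; "
           "one attendee mentioned an old Acme recall in passing")

# Query: acme AND (recall OR lawsuit) NOT hiring
query = dict(all_of=("acme",), any_of=("recall", "lawsuit"), none_of=("hiring",))
matches = [boolean_match(front_page, **query), boolean_match(passing, **query)]
print(matches)  # [True, True]
```

Both texts raise the same flag. Nothing in the query can express that one is a story about the brand and the other is an aside.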

By the mid-2000s, a cottage industry of digital monitoring platforms emerged to address these limitations with varying degrees of success. Dashboard tools aggregated mentions, applied basic sentiment tags, and allowed users to filter by date, source, and keyword combination. These were improvements. But the underlying architecture remained the same: find the mention, report the mention, let a human analyst figure out what it means.

The result was a function that consumed enormous amounts of analyst time while producing reports that were often outdated before they reached a decision-maker's desk. It still takes many teams an entire quarter to compile and analyze coverage—by which point the narrative has already been shaped by outside forces.

The Rise of Digital Media Monitoring at Scale

As social media platforms exploded through the 2010s, the volume problem became acute. A brand crisis could unfold in hours. A product launch could generate thousands of mentions in minutes. The response was a generation of social listening platforms that added social channels to their coverage and built real-time dashboards. Share-of-voice metrics emerged. Sentiment analysis—still crude at this stage—attempted to categorize mentions as positive, negative, or neutral.

These tools were more capable than anything before them. They were also increasingly overwhelming. The more channels you monitored, the more noise you produced. Sentiment classifiers of this era were notoriously unreliable, frequently missing sarcasm, jargon, or context-dependent language. Compound that across millions of daily mentions, and communications teams were drowning in data they couldn't translate into action.

| Era | Primary Method | Key Limitation | Time to Insight |
| --- | --- | --- | --- |
| Press Clipping (1880s–1960s) | Manual physical cutting | Coverage gaps, extreme delay | Days to weeks |
| Broadcast Monitoring (1960s–1990s) | Manual recording/transcription | Labor-intensive, incomplete | Days to weeks |
| Digital/Boolean (1990s–2010s) | Keyword search, alert systems | High noise, no context | Hours to days |
| Social Listening (2010s–2020s) | Platform APIs, dashboards | Volume overwhelm, weak sentiment | Hours (but noisy) |
| AI Media Monitoring (2020s–present) | NLP, ML, narrative clustering | Still evolving | Real-time |

The manual labor burden was significant. Most monitoring time went to data collection rather than analysis, a frustrating reality that compounded across every member of a communications team, and one the industry has increasingly acknowledged as AI's role in PR has grown. The real problem wasn't a shortage of tools. It was that the tools available were designed to collect data, not derive meaning from it.

What Do AI-Powered Media Monitoring Tools Actually Do Differently?

Machine learning and natural language processing began changing the media monitoring landscape in ways that went beyond incremental improvement. The shift wasn't just about speed or scale—it was a fundamental change in what the tools could actually do.

AI-powered media monitoring tools can now:

Understand context and intent, not just keyword presence. An AI system can distinguish between "the company's earnings beat expectations" and "the company narrowly avoided a miss"—two mentions that share keywords but convey very different signals.

Apply brand prominence scoring, recognizing whether your brand is the subject of an article or a passing mention four paragraphs in.

Weight publications intelligently, with tiering based on domain authority, readership, and actual influence—so a Forbes feature registers very differently from a niche blog.

Track social amplification, incorporating share data to understand how much reach an article actually achieved.

Perform brand-centric sentiment analysis that reflects how coverage affects your brand's specific position, rather than applying a generic positive/negative tag.
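As a rough illustration of how prominence, publication tier, and social amplification might combine into a single score, consider the sketch below. Everything in it is an assumption made for the example: the `Mention` fields, the tier weights, and the formula itself are hypothetical and do not describe any specific platform's scoring model.

```python
import math
from dataclasses import dataclass

# Hypothetical tier weights: 1 = top-tier national outlet, 3 = niche blog.
TIER_WEIGHTS = {1: 1.0, 2: 0.6, 3: 0.25}

@dataclass
class Mention:
    outlet_tier: int              # 1, 2, or 3
    brand_in_headline: bool       # is the brand the subject of the piece?
    first_mention_paragraph: int  # 1-based paragraph of the first brand mention
    shares: int                   # social shares of the article

def prominence(m: Mention) -> float:
    """1.0 when the brand is in the headline, decaying the deeper it first appears."""
    if m.brand_in_headline:
        return 1.0
    return 1.0 / m.first_mention_paragraph

def impact_score(m: Mention) -> float:
    """Tier weight x prominence x a log-scaled amplification factor (illustrative)."""
    amplification = 1.0 + math.log10(1 + m.shares)
    return TIER_WEIGHTS[m.outlet_tier] * prominence(m) * amplification

feature = Mention(outlet_tier=1, brand_in_headline=True,
                  first_mention_paragraph=1, shares=999)
passing = Mention(outlet_tier=3, brand_in_headline=False,
                  first_mention_paragraph=4, shares=0)

print(round(impact_score(feature), 2), round(impact_score(passing), 4))  # 4.0 0.0625
```

A mention counter would record these as two equal hits. Under this toy formula the feature story scores 64 times higher than the passing reference, which is the kind of separation weighted scoring is meant to provide.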

The shift from keyword matching to semantic understanding is significant for enterprise communications teams. A Muck Rack survey of more than 1,000 PR professionals found that nine in ten say AI helps them work faster, and roughly eight in ten report improved work quality. But speed and quality improvements are table stakes. The more important shift is what AI enables at the analytical layer.

From Mentions to Narratives

Perhaps the most significant conceptual shift in modern AI media monitoring is the movement from tracking individual mentions to identifying narrative clusters. An isolated mention of your product is data. A pattern of related stories building a connected theme across 47 different publications over 14 days—that's a narrative. And narratives, not mentions, are what actually move public perception.

Consider the difference: legacy monitoring platforms could tell you your brand was mentioned 847 times last month. But they couldn't tell you that 600 of those mentions were contributing to a single emerging storyline about supply chain concerns—or that the remaining 247 were unrelated noise. That distinction is the difference between reactive crisis management and proactive narrative intelligence.

When similar articles are automatically grouped into narratives, communications leaders gain a fundamentally different view of their media landscape. Instead of scrolling through an undifferentiated list of mentions, they can see the three or four dominant storylines shaping their brand's reputation—and assess which of those require attention or amplification.
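The grouping idea can be sketched with a deliberately simple stand-in: greedy clustering of headlines by word overlap (Jaccard similarity). Production systems would more plausibly rely on semantic embeddings, and this is not a description of any vendor's clustering method. The headlines, the stopword list, and the 0.25 threshold are all hypothetical.

```python
import re

STOPWORDS = {"a", "an", "the", "of", "in", "on", "as", "for", "to", "and", "at"}

def tokens(headline):
    """Lowercased word set with common stopwords removed."""
    return {w for w in re.findall(r"[a-z]+", headline.lower()) if w not in STOPWORDS}

def jaccard(a, b):
    """Overlap between two word sets: |intersection| / |union|."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def cluster_headlines(headlines, threshold=0.25):
    """Assign each headline to the most similar existing cluster, or start a new one."""
    clusters = []  # each cluster: {"terms": set of words, "items": list of headlines}
    for headline in headlines:
        t = tokens(headline)
        best, best_sim = None, threshold
        for c in clusters:
            sim = jaccard(t, c["terms"])
            if sim >= best_sim:
                best, best_sim = c, sim
        if best is None:
            clusters.append({"terms": set(t), "items": [headline]})
        else:
            best["items"].append(headline)
            best["terms"] |= t
    return [c["items"] for c in clusters]

headlines = [
    "Acme supply chain delays hit holiday shipments",
    "Supply chain delays force Acme to cut forecast",
    "Acme names new chief marketing officer",
    "Supply chain delays put Acme resilience in question",
    "New chief marketing officer joins Acme from rival",
]
narratives = cluster_headlines(headlines)
print([len(group) for group in narratives])  # [3, 2]
```

Five mentions collapse into two storylines: a supply chain narrative with three articles and an executive hire with two. The same move, applied at scale, is what turns a mention count into a view of which stories are actually forming.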

Real-Time Analysis vs. Quarterly Reports

The quarter-long analysis cycle that has plagued communications teams for decades is a direct consequence of the labor required by legacy monitoring platforms. When a human analyst has to manually review, tag, clean, and synthesize coverage before a report can be produced, the report will always lag behind the narrative.

AI-powered platforms eliminate most of that manual labor from the process. Coverage is automatically ingested, tagged, analyzed, and clustered the moment it's published. Rather than a quarterly report, teams have access to an evolving view of their media landscape—with impact scores that surface which narratives are gaining momentum and which are fading.

This isn't a marginal improvement. It changes what communications teams can do. They can react to emerging narratives in real time rather than months after the fact. They can assess a campaign's effectiveness while it's still running. They can identify a reputational risk before it becomes a crisis.

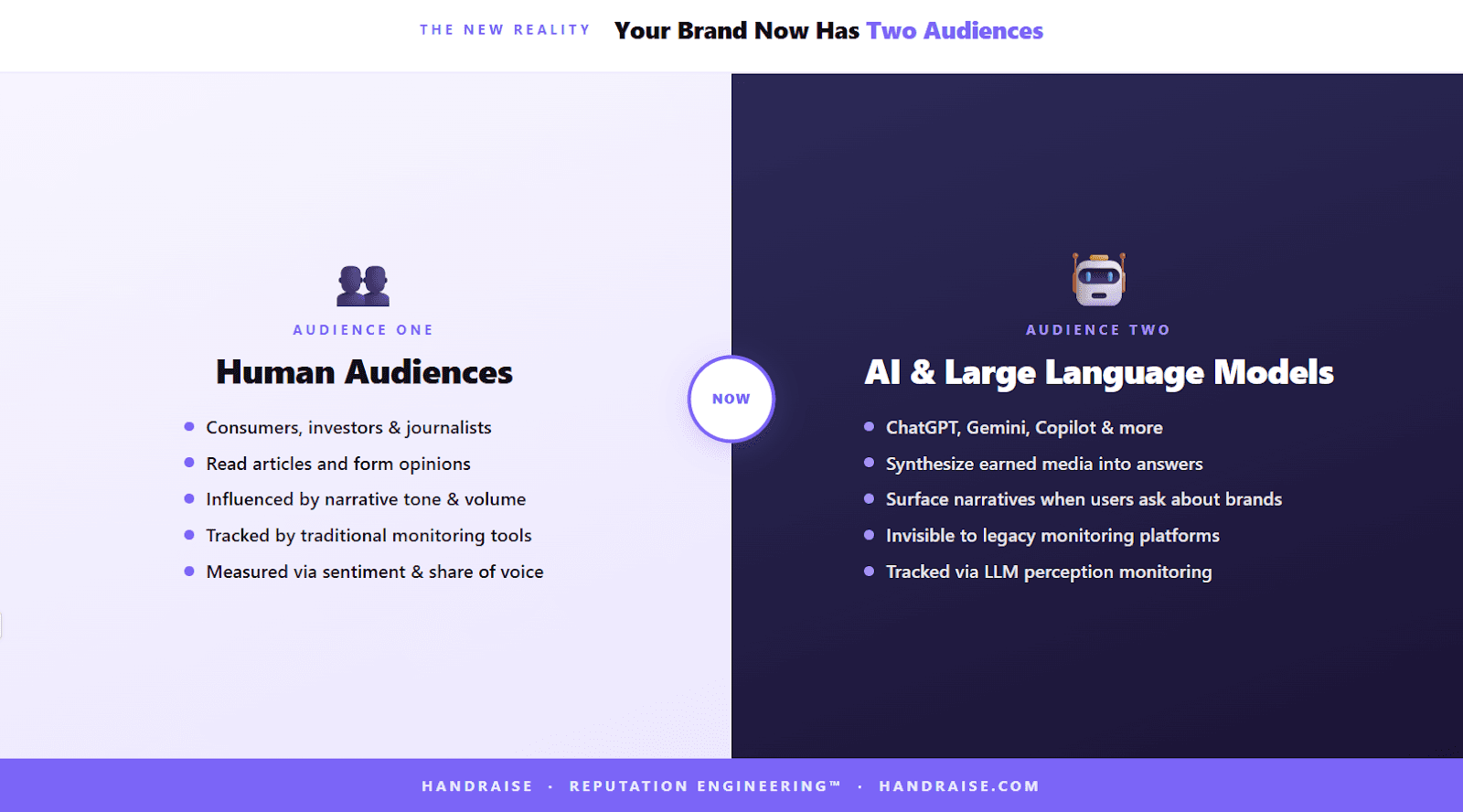

What Happens When AI Itself Becomes the Audience?

Here's where the evolution of modern media monitoring takes a turn that most organizations haven't fully processed yet.

For most of media monitoring's history, "audiences" meant humans: consumers, journalists, investors, policymakers. The goal was to understand how human audiences perceived your brand through coverage, and to influence that perception through strategic communications.

That model is still valid—but it's now incomplete. Large language models have become a primary channel through which people discover information, evaluate brands, and form opinions. ChatGPT alone now surpasses 900 million weekly active users, and similar AI-powered search tools are embedded in Google, Microsoft Bing, and dozens of other platforms. When someone asks an AI assistant about your industry, your competitors, or your company, the response they receive is shaped by a synthesis of the earned media landscape—including coverage your monitoring tools are already tracking.

This creates a new imperative: understanding not just what traditional audiences think about your brand, but how LLMs represent your brand when someone queries them. An AI assistant that consistently characterizes your company as under investigation, or consistently fails to mention you in relevant category searches, is a reputational problem that platforms built for a pre-AI world were never designed to detect.

Why LLM Perception Is a Communications Priority

LLMs synthesize information from earned media, Wikipedia, social platforms, and a vast range of digital sources. Their characterization of any given brand reflects—to a significant extent—the narrative ecosystem that surrounds it. If the dominant media narrative about your organization emphasizes financial instability, that's what an LLM is likely to surface when asked about your company.

This means that the work of narrative intelligence directly influences LLM perception. A brand that proactively shapes the narrative clusters defining its media coverage isn't just managing its reputation with human audiences—it's influencing the inputs that AI systems draw upon when generating responses about that brand.

The reverse is equally true. A brand that ignores the narrative layer, allowing external forces to define its media storylines, will find those narratives reflected back in AI-generated responses it has no visibility into and no mechanism to address.

| Traditional Media Monitoring | AI-Powered Narrative Intelligence |
| --- | --- |
| Tracks individual mentions | Clusters coverage into narratives |
| Keyword/Boolean-based | Semantic NLP analysis |
| Delayed reporting cycles | Real-time impact scoring |
| Generic positive/negative sentiment | Brand-centric sentiment analysis |
| Publication lists | Tiered source authority weighting |
| Human audience focus only | Monitors LLM brand perception |
| Share of voice (static) | Dynamic competitive share of voice |

What Should You Look for in a Modern AI Monitoring Platform?

For enterprise communications leaders evaluating platforms today, the benchmark has shifted significantly. The questions worth asking go beyond coverage breadth and reporting functionality.

Does the platform understand narratives, not just mentions? The ability to automatically group related coverage into thematic clusters—and to track those clusters over time—is among the most meaningful differentiators in today's market. A list of mentions is data. Three dominant narrative themes shaping your brand's reputation this week is intelligence.

How does sentiment analysis work? Generic positive/negative/neutral tagging is table stakes. The more valuable capability is brand-centric sentiment analysis—understanding how coverage positions your brand specifically, not just whether an article's overall tone is favorable.

How are publications weighted? Not all coverage is equal. A system that treats a passing mention in a low-authority blog the same as a feature story in a tier-one business publication will produce misleading impact scores. Look for tiered source weighting based on readership and domain authority.

What's the competitive share-of-voice methodology? Static snapshots have limited strategic value. Dynamic competitive benchmarking—updated continuously and segmented by narrative theme—gives communications leaders a meaningful view of where they're winning and losing relative to competitors.

Does the platform track LLM perception? Understanding how AI systems characterize your brand—and what media inputs drive that characterization—is becoming a foundational element of modern PR analytics. Platforms that don't address this dimension are already a step behind.
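On the share-of-voice question above, a small sketch shows why segmenting by narrative theme matters. The brands, themes, and counts are hypothetical, and the function is an illustrative assumption rather than any platform's methodology.

```python
from collections import Counter, defaultdict

def share_of_voice_by_theme(mentions):
    """mentions: iterable of (brand, theme) pairs -> {theme: {brand: share}}."""
    counts = defaultdict(Counter)
    for brand, theme in mentions:
        counts[theme][brand] += 1
    return {
        theme: {brand: n / sum(c.values()) for brand, n in c.items()}
        for theme, c in counts.items()
    }

# Hypothetical sample: eight mentions split evenly between two brands overall.
sample = (
    [("Acme", "supply chain")] * 3
    + [("Rival", "supply chain")] * 1
    + [("Acme", "sustainability")] * 1
    + [("Rival", "sustainability")] * 3
)
sov = share_of_voice_by_theme(sample)
print(sov["supply chain"]["Acme"], sov["sustainability"]["Acme"])  # 0.75 0.25
```

Overall, each brand holds 50% of the conversation. Split by theme, Acme dominates the supply chain storyline and trails on sustainability, a difference that an aggregate snapshot hides.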

Your Questions About Media Monitoring Tools, Answered

What is the difference between traditional media monitoring and AI media monitoring?

Traditional media monitoring tools rely on keyword searches and Boolean logic to identify brand mentions, then surface those mentions in a dashboard or report. AI media monitoring goes further by analyzing the context, prominence, and sentiment of coverage—and clustering related articles into narrative themes. This shifts the function from mention collection to narrative intelligence, giving teams insight into what stories are forming around their brand, not just how often their name appears.

How does AI handle sentiment analysis differently than older platforms?

Advanced AI media monitoring platforms apply natural language processing to understand sentiment in context—distinguishing sarcasm, industry jargon, and brand-specific framing that basic sentiment tools miss. The most sophisticated platforms offer brand-centric sentiment analysis, which evaluates how coverage positions a specific brand rather than applying a generic tone assessment to the article as a whole.

Why should communications leaders care about LLM monitoring?

Large language models are now a primary channel through which audiences discover and evaluate brands. ChatGPT alone processes billions of queries each month—and that's one platform among many. The way LLMs characterize a brand has direct implications for reputation, competitive positioning, and even sales. Monitoring LLM perception—and understanding which narrative threads are influencing it—is a natural extension of the media intelligence function for enterprise communications teams.

How much time can modern AI monitoring platforms save communications teams?

The manual labor involved in traditional monitoring (data cleaning, report compilation, mention review) can mean an entire quarter passes before a useful analysis reaches a decision-maker. AI platforms automate the majority of this process, compressing the cycle from months to real time. Communications teams that have made the transition report reclaiming significant analyst hours that can be redirected toward strategic work.

The Next Chapter: Engineering Your Reputation with AI

The evolution from paper clippings to AI-powered intelligence represents more than a series of technological upgrades. It reflects a fundamental change in what the communications function is capable of doing—and what enterprise leaders should expect from it.

The teams that will lead on reputation over the next decade aren't the ones tracking the most mentions. They're the ones who understand the narratives being built around their brands, can act on that intelligence in real time, and have visibility into how both human and AI audiences are interpreting their story. That means moving beyond platforms that simply count and report, toward intelligence that clusters, contextualizes, and helps shape the narrative before it hardens.

The entire arc of this evolution—from Henry Romeike's scissors to Boolean alerts to AI narrative clustering—has been pointing toward one thing: giving communications leaders the intelligence they need to engineer their reputation, not just observe it.

That's the shift Handraise was built to enable. From patented narrative clustering to brand-centric sentiment analysis to LLM impact monitoring, the platform was designed from the ground up to address what the legacy tools were never built for. If you're ready to move from monitoring to Reputation Engineering™, book a demo with Handraise and see what modern narrative intelligence looks like in practice.
